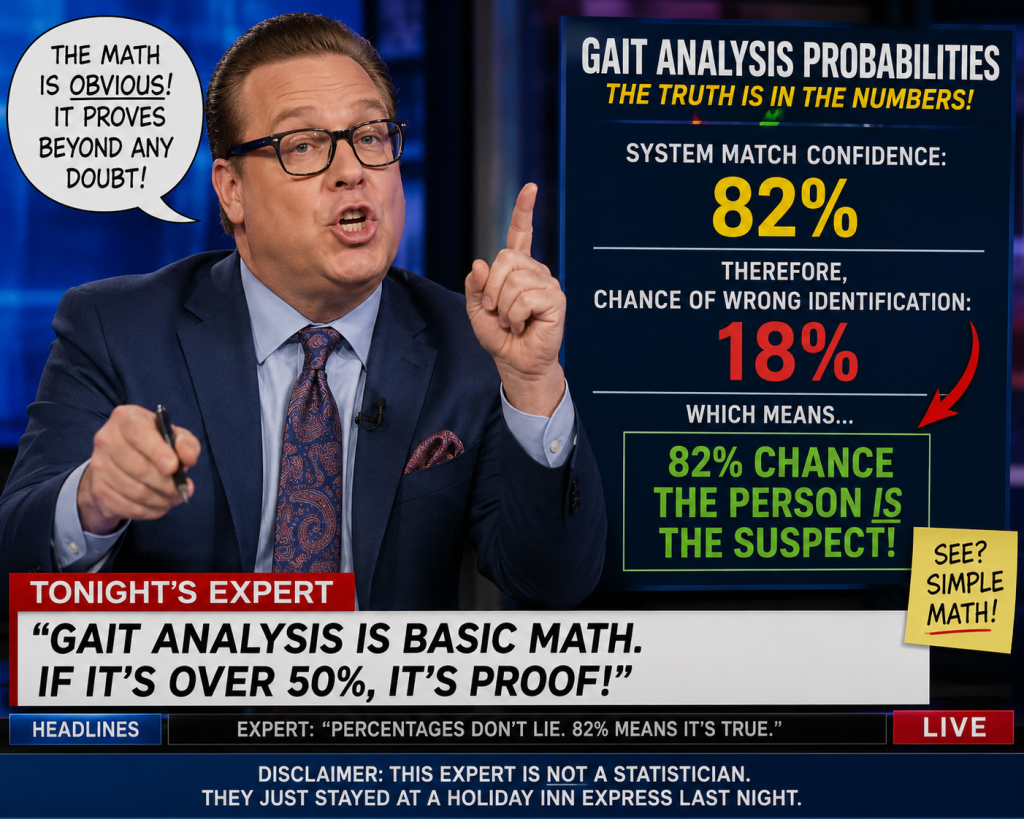

A recent segment on a cable news channel featured a financial commentator enthusiastically discussing an individual who was identified using “gait analysis” — the use of artificial intelligence to identify people based on the way they walk. The commentator highlighted the result that the technology could identify individuals with 94% accuracy, presenting the figure as if it were close to absolute certainty. But that kind of statistic is easy to misunderstand, and the way it’s often discussed can lead people to draw conclusions the number simply does not support.

A “94% probability of identifying someone using gait analysis” sounds incredibly impressive at first glance. But it’s easy to accidentally draw the wrong conclusion from that number, especially if people start talking about the “remaining 6%” of a population like Washington, D.C.

Here’s the key idea: a 94% identification rate does not mean that 94% of people are identifiable and 6% are somehow permanently anonymous, invisible, or fundamentally different. That’s the logic leap, and it’s incorrect.

Think about what the statistic is actually describing. In most cases, a “94% probability” means that when the system was tested, it correctly identified the right person 94 times out of 100 under certain conditions. It’s a statement about the performance of the system across attempts, not a statement dividing humanity into two fixed groups.
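To make this concrete, here is a small sketch of how an accuracy figure like this is usually computed. It assumes that each test trial records a true identity and the system's guess, and that accuracy is simply the share of trials where the two match. The trial data and the person IDs (`alice`, `bob`, and so on) are invented for illustration and are not from any real study.

```python
from typing import List, Tuple

# Each trial is (true_person_id, predicted_person_id). All IDs are hypothetical.
Trial = Tuple[str, str]


def identification_accuracy(trials: List[Trial]) -> float:
    """Return the fraction of trials in which the predicted ID matches the true ID."""
    if not trials:
        raise ValueError("need at least one trial")
    correct = sum(1 for true_id, predicted_id in trials if true_id == predicted_id)
    return correct / len(trials)


# Hypothetical test run: 100 trials, 94 correct and 6 mistakes.
# The same people ("alice", "bob") show up in both the correct and the incorrect trials.
trials: List[Trial] = (
    [("alice", "alice")] * 50
    + [("bob", "bob")] * 44
    + [("alice", "carol")] * 3
    + [("bob", "dave")] * 3
)

print(identification_accuracy(trials))  # 0.94
```

The number is computed over trials, not over people: nothing in the calculation sorts anyone into an “identifiable” or “unidentifiable” group.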

A simple analogy helps. Suppose a facial recognition system correctly unlocks your phone 94% of the time. That doesn’t mean 6% of your face is unrecognizable, or that 6% of people can never unlock their phones. It means the system sometimes makes mistakes. Maybe the lighting is bad, the angle is awkward, the camera quality is poor, or the algorithm gets confused.

Gait analysis works the same way. The system observes how people walk and tries to match that walking pattern to known individuals. A 94% success rate means that under the testing conditions, the algorithm got the right answer most of the time. The remaining 6% represents errors or uncertainty in the identification process, not a separate class of people.

That distinction matters because probabilities describe outcomes over repeated events, not hidden truths about individuals.

Imagine applying the system to 100,000 people in Washington, D.C. A mistaken interpretation would say: “94,000 people can be identified, while 6,000 cannot.” But that’s not what the statistic implies. In reality, any given person might be correctly identified in one recording and misidentified in another. The errors are distributed across situations and attempts, not assigned permanently to a fixed minority.
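A quick simulation shows why the “6,000 people who cannot be identified” reading breaks down. This sketch makes a strong simplifying assumption: every identification attempt succeeds with the same 94% probability, independently of who is walking and of earlier attempts. Real errors are likely to be correlated (an unusual gait or a recurring bad camera angle), so treat this as an illustration of the logic, not a model of any real system. The function name `simulate` and the choice of five recordings per person are hypothetical.

```python
import random
from typing import Tuple


def simulate(num_people: int, recordings_per_person: int,
             accuracy: float, seed: int = 0) -> Tuple[float, float, float]:
    """Simulate repeated identification attempts with independent errors.

    Assumption: each attempt succeeds with probability `accuracy`,
    independently of the person and of every other attempt.
    Returns (overall attempt accuracy,
             fraction of people misidentified at least once,
             fraction of people never identified correctly).
    """
    rng = random.Random(seed)  # seeded so the run is reproducible
    total_correct = 0
    missed_at_least_once = 0
    never_identified = 0
    for _ in range(num_people):
        # True means this recording of the person was identified correctly.
        outcomes = [rng.random() < accuracy for _ in range(recordings_per_person)]
        total_correct += sum(outcomes)
        if not all(outcomes):
            missed_at_least_once += 1
        if not any(outcomes):
            never_identified += 1
    attempts = num_people * recordings_per_person
    return (total_correct / attempts,
            missed_at_least_once / num_people,
            never_identified / num_people)


attempt_acc, missed_once, never = simulate(100_000, 5, 0.94)
print(f"attempt accuracy:            {attempt_acc:.3f}")
print(f"misidentified at least once: {missed_once:.3f}")
print(f"never identified:            {never:.6f}")
```

Under these assumptions the attempt accuracy stays near 0.94, yet roughly 1 − 0.94⁵ ≈ 27% of people are misidentified in at least one of their five recordings, while almost nobody (about 0.06⁵, under one in a million) is missed every time. The errors land on many different people, not on a fixed 6%.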

The 6% could arise for many reasons:

- Someone was wearing bulky clothing

- The camera angle was poor

- The person was injured or walking differently that day

- Two people had similar gait patterns

- The training data was incomplete

- Environmental conditions interfered

So the “6%” is really a measure of the system’s limitations and uncertainty under testing conditions.

Another important issue is base rates. Even a highly accurate system can produce misleading results when applied to large populations. If the system scans millions of people, even a small error rate can create thousands of false matches. That’s why statisticians and scientists are careful about interpreting probabilities. Accuracy numbers don’t automatically translate into certainty about individuals or populations.
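The base-rate problem can be worked through with simple arithmetic. One caution first: a single “94% accuracy” figure does not tell you how often the system flags the *wrong* person, so this sketch treats the hit rate and the false-match rate as separate inputs. The 1,000,000 people scanned, the 10 actual targets, and the 1% false-match rate below are invented for illustration, and `expected_matches` is a hypothetical helper.

```python
from typing import Tuple


def expected_matches(population: int, true_targets: int,
                     hit_rate: float, false_match_rate: float) -> Tuple[float, float, float]:
    """Expected true matches, false matches, and precision when scanning a population.

    hit_rate: probability that a real target is flagged (assumed input).
    false_match_rate: probability that a non-target is wrongly flagged (assumed input).
    """
    true_matches = true_targets * hit_rate
    false_matches = (population - true_targets) * false_match_rate
    # Precision: of everyone flagged, what share are actual targets?
    precision = true_matches / (true_matches + false_matches)
    return true_matches, false_matches, precision


# Hypothetical scenario: 1,000,000 people scanned, 10 real targets,
# a 94% hit rate, and an assumed 1% false-match rate.
true_m, false_m, precision = expected_matches(1_000_000, 10, 0.94, 0.01)
print(f"expected true matches:  {true_m:.1f}")   # 9.4
print(f"expected false matches: {false_m:.1f}")  # 9999.9
print(f"share of flags that are correct: {precision:.4%}")
```

In this made-up scenario, about 10,000 innocent people would be flagged alongside roughly 9 real targets, so fewer than 0.1% of the matches would point at the right person, even though the system catches 94% of the people it is looking for.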

The correct interpretation of a 94% probability is something like this:

“Given the conditions of the study, the gait analysis system correctly identified individuals about 94% of the time.”

That’s all it says.

It does not say:

- 94% of people are uniquely trackable forever

- 6% of people are immune to identification

- The population can be divided into identifiable and unidentifiable groups

The mistake comes from turning a probability about system performance into a claim about fixed characteristics of people. Those are completely different kinds of statements.

—

Please read our disclaimer on our home page.